Goodhart's Law

Charles Goodhart's 1975 observation is usually paraphrased as:

When a measure becomes a target, it ceases to be a good measure.

I want to talk about a journey where I enforced 100% code coverage on an open source project and started to hate it. Let's roll the clock back a good few months.

Through work, I built an open source GitHub Action that collects the coverage percentage from a Clover export and uses it to enforce a specific minimum amount of code coverage. So when I started a new hobby project, I set it up without much configuration, like so.

    - name: Execute tests (Unit and Feature tests) via PHPUnit
      run: php artisan test --parallel -p2 --coverage-clover=output.xml

    - name: Code Coverage Check
      uses: sourcetoad/phpunit-coverage-action@v1
      with:
        clover_report_path: output.xml
        min_coverage_percent: 50
        fail_build_on_under: false

The above example takes the Clover report that the artisan test command writes via --coverage-clover and calculates the coverage percentage from it.
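To make the percentage calculation concrete, here is a minimal plain-PHP sketch of reading a Clover report. It assumes the percentage is covered elements divided by total elements from the project-level metrics; the action's exact formula may differ. The function name `cloverCoveragePercent` and the trimmed sample report are hypothetical, made up for illustration.

```php
<?php

declare(strict_types=1);

/**
 * Read the project-level <metrics> element of a Clover report and
 * return covered elements as a percentage of all elements.
 * Assumption: "elements" is the metric of interest.
 */
function cloverCoveragePercent(string $cloverXml): float
{
    $xml = simplexml_load_string($cloverXml);
    if ($xml === false || !isset($xml->project->metrics)) {
        throw new InvalidArgumentException('Not a Clover report with project metrics.');
    }

    $metrics = $xml->project->metrics;
    $total = (int) $metrics['elements'];
    $covered = (int) $metrics['coveredelements'];

    // A project with nothing to cover is reported as 0% instead of dividing by zero.
    return $total === 0 ? 0.0 : round($covered * 100 / $total, 2);
}

// Hypothetical, heavily trimmed report used only for illustration.
$sample = <<<'XML'
<?xml version="1.0" encoding="UTF-8"?>
<coverage generated="0">
  <project timestamp="0">
    <metrics files="1" methods="4" coveredmethods="1" statements="16" coveredstatements="5" elements="20" coveredelements="6"/>
  </project>
</coverage>
XML;

$percent = cloverCoveragePercent($sample);
$minimum = 50.0; // plays the role of min_coverage_percent

printf("Coverage: %.2f%% (minimum %.2f%%)\n", $percent, $minimum);
echo $percent >= $minimum ? "OK\n" : "Under the minimum\n";
```

With 6 of 20 elements covered, the sample lands under a 50% minimum, which is the situation where a failing build would kick in if fail_build_on_under were enabled.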

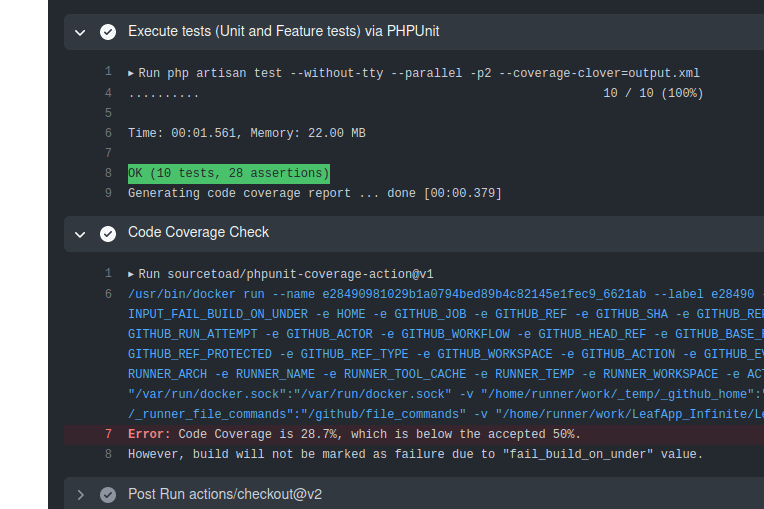

So most build outputs in the first few days of this project looked like:

Which was cool that everything worked out, but a ~30% coverage was not very good, especially for such a small project.

I knew I wasn't testing this new Laravel Livewire system I was using, so I set off through 2 semi-large pull requests (#1 and #2) to add some automated testing.

I was blown away that after just testing my major components, my new code coverage was 81%. So I knew I had to reach even further.

Prior to that though, I wanted to ensure that no regressions dropped my percentage, so I changed the above GitHub Action to enforce coverage at 80% or higher and committed it.

This time, with all components tested, I needed better insight into what was not covered. So it was time to leverage my IDE (PhpStorm) as well as look into an HTML report.

I wrote up a quick Composer command.

    "coverage": [
        "@php -dxdebug.mode=coverage ./vendor/bin/phpunit --coverage-html=output"
    ]

Now I was one command, composer coverage, away from running my test suite with coverage and getting a built HTML report.

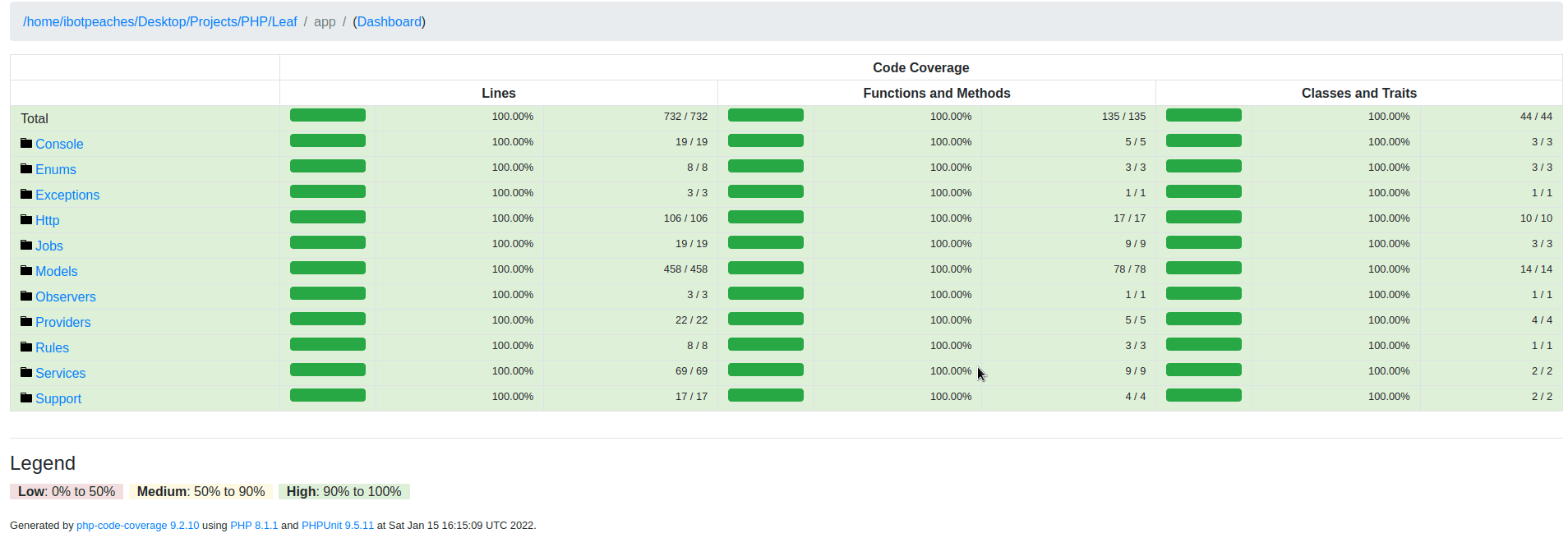

So the HTML report was great and helped me continually push further towards 100%. The interface and report clearly defined which functions and even lines were untouched by tests.

This is where I learned I had to strip out everything from Laravel that I did not use, because that unused code was dragging my code coverage down. It also made clear to me that my console commands had 0 coverage. That led to the introduction of assertions for console commands, and one pull request later I was inching into the 90% realm of coverage.

Once at 90%+ I realized this was getting difficult to push further. Code had to be refactored to be testable, and functions that had a safety net of return null; had to be tested even when a null was nearly impossible.

It became tough. Imagine a function that throws an exception on error. If that function returns a value and its contract is array or null, callers naturally have to support both. However, you know the chances of a null return without an exception are close to zero, so this leads to very odd test code just to be sure all paths are covered.

It also forced me to write slightly better code, though. For example, let's take the case above and see how it looks today.

    public function match(string $matchUuid): ?Game
    {
        $response = $this->pendingRequest->get('stats/matches/retrieve', [
            'id' => $matchUuid
        ])->throw();

        $data = $response->json();

        return Game::fromHaloDotApi((array)Arr::get($data, 'data', []));
    }

This block of code does the following:

- Calls out to a service, intentionally throwing an exception if any non-2xx response is returned (Guzzle)

- Attempts to parse the response into JSON (Http Facade)

- Hydrates the response into a model, ensuring an array is passed into the hydration (Eloquent)

- Finally, returns null if the hydration could not work.

This is easy to test, because:

- An exception bubbles out, which tests the sad path

- A null path is simply mocking a null return on a 200

- A happy path is mocking the real 200 payload.
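The three paths can be sketched in plain PHP without the framework. This is a stand-in under stated assumptions, not the project's actual test code: `retrieveMatch`, the `$transport` callable, and the "no id means null" hydration rule are all hypothetical. In a real Laravel test suite the transport's role would be played by faked HTTP responses.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical stand-in for the method above. $transport replaces the HTTP
 * client and returns [status code, decoded JSON body].
 */
function retrieveMatch(callable $transport, string $matchUuid): ?array
{
    [$status, $body] = $transport('stats/matches/retrieve', ['id' => $matchUuid]);

    // Mirror ->throw(): client and server error responses become exceptions.
    if ($status >= 400) {
        throw new RuntimeException("Request failed with status {$status}");
    }

    // Mirror (array)Arr::get($data, 'data', []): hydration always gets an array.
    $data = is_array($body) ? (array) ($body['data'] ?? []) : [];

    // Hypothetical hydration rule: without an id there is nothing to build.
    return isset($data['id']) ? $data : null;
}

// Sad path: an error status must surface as an exception.
$sadPathThrew = false;
try {
    retrieveMatch(fn (string $path, array $query): array => [500, null], 'abc');
} catch (RuntimeException $e) {
    $sadPathThrew = true;
}

// Null path: a 200 with a null body hydrates to null.
$nullResult = retrieveMatch(fn (string $path, array $query): array => [200, null], 'abc');

// Happy path: a 200 with a real-looking payload hydrates to data.
$happyResult = retrieveMatch(
    fn (string $path, array $query): array => [200, ['data' => ['id' => 'abc']]],
    'abc'
);

var_dump($sadPathThrew, $nullResult, $happyResult);
```

Because errors leave through the exception, the null branch only needs one cheap fake response, and no contorted setup is required to reach it.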

So after a few changes like that, I was now around 95% with a pull request.

The move from 95% code coverage to 100% coverage, a mere 5%, took longer than building the project from nothing to initial deployment, and this is no joke.

Enums became a stress point, with every possible enumeration needing testing. I became quite familiar with Laravel sequences, so that generated models in a test could cycle through a variety of possibilities. Pair that with a number of iterations equal to the length of the sequence and all possible enums were run.
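The idea of cycling generated records through every enum case can be sketched in plain PHP (8.1 or newer for enums). The `Outcome` enum and the `makeRecords` helper are hypothetical stand-ins, since the project's real enums and factories are not shown here; in Laravel the cycling itself would come from a factory sequence.

```php
<?php

declare(strict_types=1);

// Hypothetical enum used only for illustration.
enum Outcome: int
{
    case Win = 1;
    case Loss = 2;
    case Draw = 3;

    public function label(): string
    {
        return match ($this) {
            self::Win => 'Won',
            self::Loss => 'Lost',
            self::Draw => 'Tied',
        };
    }
}

/**
 * Plain-PHP stand-in for a factory sequence: build $count records,
 * cycling through the given values in order.
 */
function makeRecords(array $outcomes, int $count): array
{
    $records = [];
    for ($i = 0; $i < $count; $i++) {
        $records[] = ['outcome' => $outcomes[$i % count($outcomes)]];
    }

    return $records;
}

// One record per case, so every arm of label() runs exactly once.
$cases = Outcome::cases();
$records = makeRecords($cases, count($cases));
$labels = array_map(fn (array $record): string => $record['outcome']->label(), $records);

print_r($labels);
```

Tying the record count to the number of cases means a newly added case is picked up by the test automatically.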

Still, I sat at 98% and struggled with the remaining gaps, which required micro changes to how logic was returned. Fighting toward a 100% goal while a static analyzer (PHPStan) was also battling my syntax was tough.

But I finally did it. One pull request went up setting the coverage to 100%. I quickly learned that Faker randomness can lead to sub-100% coverage, so patches kept coming.
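Why randomness hurts here can be shown with a small plain-PHP sketch. The `describe` function and its three branches are hypothetical; `random_int` stands in for a Faker-backed factory picking values at random.

```php
<?php

declare(strict_types=1);

// Hypothetical function where each input value exercises a different branch.
function describe(int $value): string
{
    return match ($value) {
        1 => 'low',
        2 => 'medium',
        3 => 'high',
    };
}

/** Return the sorted list of distinct branches reached by the given inputs. */
function branchesHit(array $inputs): array
{
    $hit = array_values(array_unique(array_map('describe', $inputs)));
    sort($hit);

    return $hit;
}

// Random inputs: three draws from three values may repeat and skip a branch.
$random = [];
for ($i = 0; $i < 3; $i++) {
    $random[] = random_int(1, 3);
}

// Explicit inputs: every branch is reached on every run.
$explicit = [1, 2, 3];

printf("random hit %d of 3 branches\n", count(branchesHit($random)));
printf("explicit hit %d of 3 branches\n", count(branchesHit($explicit)));
```

Three independent random draws from three values reach all three branches in only 6 of 27 cases, about 22% of runs, so a suite that leans on random data for branch coverage will dip under 100% most of the time until the inputs are pinned.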

Now the project was enforced at 100% and I wanted to work on features. What used to be fun creation and quick iteration now stopped abruptly until every single bit of experimental code was tested.

I can see the argument for this on mission critical or even enterprise systems, but on a little hobby project it was draining my energy. Still, I continued to push on with the enforcement for the sake of this eventual blog post and being able to proudly say I have an open source Laravel project at 100% code coverage.

So take a look. Chances are we are still at 100% coverage - https://github.com/iBotPeaches/LeafApp_Infinite.