Most of my site requests are bots

A few months ago, when Halo Infinite launched, I built a new site that I've blogged about a few times, both about my disappointment with the game and about how I wired up the CI/CD process. One thing I was quite happy about was how popular this site is compared to its previous iterations.

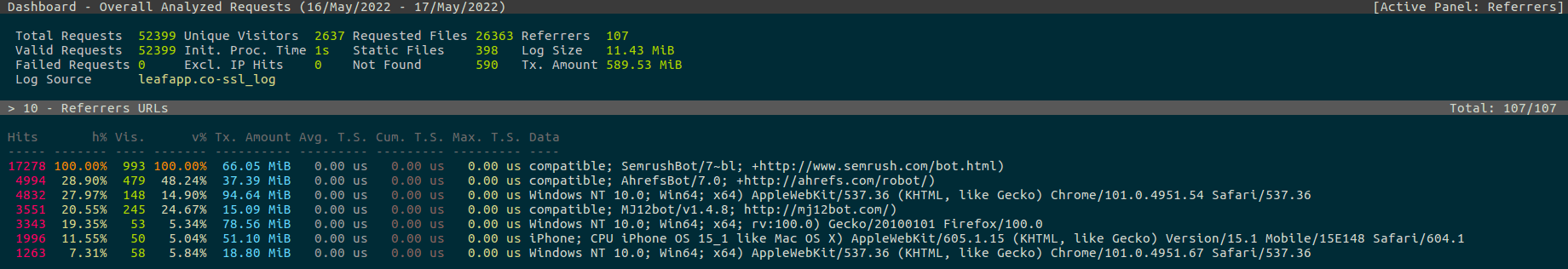

Looking at the Halo 5 iteration of this site, I was probably tracking fewer than 10 unique hits a day. However, I still worked on it and enjoyed building it even if no one was visiting. The new iteration is far more popular: the May 16th data alone shows roughly 2,600 unique hits, which add up to roughly 52,000 requests.

So while I was investigating some growth concerns, there were a few things I was tracking:

- Failed jobs database table at 3GB (what is failing so often)

- Jobs queue growing to large levels

- Images loading slowly from upstream service

- Blocking "bad" bots

I easily tracked down the failed job issue, added more queue runners, and ended up wiring up an automatic image downloader and optimizer that pulls from the upstream service.

All that was left was blocking "bad" bots, and I had no idea how many requests these bots make.

Let's look at the top 5 User Agents for May 16th, 2022.

- 18,004 hits - SemrushBot (Bot)

- 5,413 hits - AhrefsBot (Bot)

- 4,832 hits - Mobile iPhone (User)

- 4,020 hits - MJ12bot (Bot)

- 3,343 hits - Firefox (User)

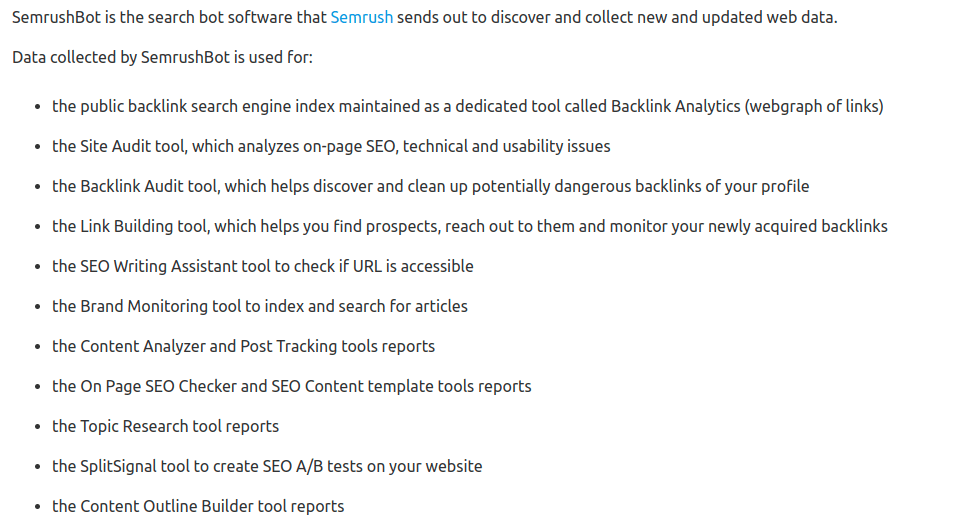
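A tally like the one above can be pulled out of a web server access log with a few lines of Python. This is only a rough sketch, not how the numbers in this post were produced: it assumes the log uses the common "combined" format (user agent as the last quoted field), and the `BOT_MARKERS` list, the `classify` and `top_agents` helpers, and the sample lines are all made up for illustration.

```python
import re
from collections import Counter

# Hypothetical substrings used to flag crawler traffic; extend as needed.
BOT_MARKERS = ("SemrushBot", "AhrefsBot", "MJ12bot")

# Assumes the "combined" log format, where the user agent is the
# last double-quoted field on each line.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def classify(user_agent):
    """Return the matching bot name, or 'other' for everything else."""
    lowered = user_agent.lower()
    for marker in BOT_MARKERS:
        if marker.lower() in lowered:
            return marker
    return "other"


def top_agents(lines, n=5):
    """Count requests per classified user agent and return the n largest."""
    counts = Counter()
    for line in lines:
        match = UA_PATTERN.search(line)
        if match:
            counts[classify(match.group(1))] += 1
    return counts.most_common(n)


# Made-up sample lines, only here to show the expected input shape.
sample = [
    '203.0.113.5 - - [16/May/2022:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; SemrushBot/7~bl; +http://www.semrush.com/bot.html)"',
    '203.0.113.5 - - [16/May/2022:10:00:01 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; SemrushBot/7~bl; +http://www.semrush.com/bot.html)"',
    '198.51.100.7 - - [16/May/2022:10:00:02 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)"',
    '192.0.2.9 - - [16/May/2022:10:00:03 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (X11; Linux x86_64; rv:100.0) Gecko/20100101 Firefox/100.0"',
]

if __name__ == "__main__":
    for agent, hits in top_agents(sample):
        print(f"{hits} hits - {agent}")
```

In practice you would pass an open log file instead of `sample`, since `top_agents` only needs something it can iterate over line by line.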

So right out of the gate - why does Semrush have so many hits? What could it possibly be doing that is beneficial?

Their website has a long list of reasons why this service is needed and what it does. Every single item on that list looks to be related to the kind of marketing push and research one might genuinely need for a site. However, Leaf (leafapp.co) is a free site I build in my hobby time, and I don't really need any of that.

Especially when this service just hammers my site non-stop. That made blocking it an easy call, so now my robots.txt looks a bit different.

User-agent: SemrushBot

Disallow: /

User-agent: MJ12bot

Disallow: /

User-agent: AhrefsBot

Disallow: /

So then I got curious and started looking at some of my failed hobby projects that I still host for some reason. All these bots love hammering sites that are dead! I don't get it - spidering a website must be pretty cheap if they can afford to hammer sites I haven't even visited in months.
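As a quick sanity check on the robots.txt rules above, Python's standard library `urllib.robotparser` can tell you how a compliant crawler would read them. This is a minimal sketch: the `is_allowed` helper is a name I made up, and the rules are pasted in as a string rather than fetched from the live site. Keep in mind robots.txt is advisory, so this only shows what a well-behaved bot should conclude.

```python
from urllib.robotparser import RobotFileParser

# The three rules from this post, pasted in as a string for the check.
ROBOTS_TXT = """\
User-agent: SemrushBot
Disallow: /

User-agent: MJ12bot
Disallow: /

User-agent: AhrefsBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(agent, url="https://leafapp.co/"):
    """Hypothetical helper: may this user agent token fetch the URL?"""
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    for agent in ("SemrushBot", "MJ12bot", "AhrefsBot", "Firefox"):
        status = "allowed" if is_allowed(agent) else "blocked"
        print(f"{agent}: {status}")
```

Note that the parser matches on the short product token (for example `SemrushBot`), not the full user agent string a bot sends in its request headers.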

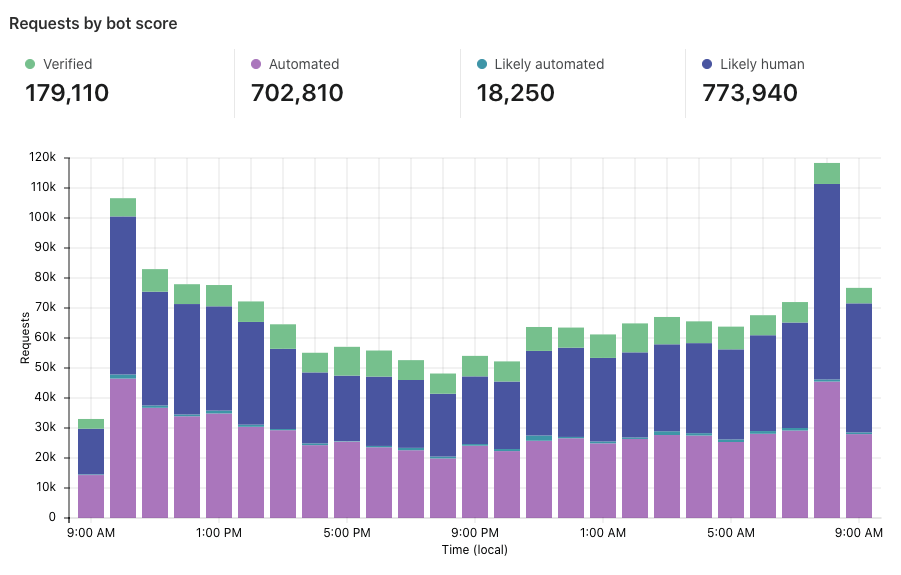

So this led me down a hypothetical line of thinking - folks are complaining that Twitter is all bots. I'm complaining that a good portion of my site's traffic is spider hits. I'm starting to wonder if a majority of web traffic is not real.

This seems plausible when Cloudflare publishes blog posts with graphs that help reinforce this theory at a larger scale.
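To put a rough number on that, here is the arithmetic using only the May 16th figures quoted earlier in this post. It is a back-of-the-envelope sketch: the 52,000 total is approximate, I'm assuming the "hits" in the top 5 list are the same unit as "requests", and the variable names are just for illustration.

```python
# Figures quoted earlier in this post for May 16th, 2022.
TOTAL_REQUESTS = 52_000  # approximate total for the day

# Top 5 user agents: name -> (hits, kind)
TOP_AGENTS = {
    "SemrushBot": (18_004, "bot"),
    "AhrefsBot": (5_413, "bot"),
    "Mobile iPhone": (4_832, "user"),
    "MJ12bot": (4_020, "bot"),
    "Firefox": (3_343, "user"),
}

bot_hits = sum(hits for hits, kind in TOP_AGENTS.values() if kind == "bot")
user_hits = sum(hits for hits, kind in TOP_AGENTS.values() if kind == "user")
bot_share = bot_hits / TOTAL_REQUESTS

if __name__ == "__main__":
    print(f"Bot hits in the top 5:  {bot_hits:,}")
    print(f"User hits in the top 5: {user_hits:,}")
    print(f"Bot share of all requests: {bot_share:.1%}")
```

Those three crawlers alone account for 27,437 hits, or roughly 52.8% of the day's requests, before counting any bots outside the top 5.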

So bot or not - Leaf continues to be built and a few more bad bots are added to the robots.txt.