Letting Dependabot merge

About a year ago I hit 100% code coverage on an open source project known as Leaf. I blogged about it when I hit that coverage marker, and after a year it's worked out quite well. Adding large new features is a pain because of everything that requirement demands, but for little changes here and there I now have full confidence in knowing whether they regress or break the site.

So one thing I wanted to experiment with: if I have 100% code coverage, why not let Dependabot just merge what it wants?

For those unaware - Dependabot without configuration will present pull requests to your project when it detects a vulnerable dependency. It keeps the change-set small and only updates the lock file and required child dependencies of the affected one.

I wanted to explore how to configure Dependabot to not only update for vulnerable dependencies, but to properly follow semantic versioning and update any package when a new version exists.

So I created a new configuration file in my project at .github/dependabot.yml with the following:

    version: 2
    updates:
      - package-ecosystem: github-actions
        directory: /
        schedule:
          interval: daily
          time: "04:00"
          timezone: "America/New_York"
      - package-ecosystem: npm
        directory: /
        schedule:
          interval: daily
          time: "04:00"
          timezone: "America/New_York"
      - package-ecosystem: composer
        directory: /
        schedule:
          interval: daily
          time: "04:00"
          timezone: "America/New_York"

I opted into three different ecosystems for updates.

- Composer - for the PHP side.

- NPM - for the Node/asset bundling side.

- GitHub Actions - for the CI/CD pipeline.

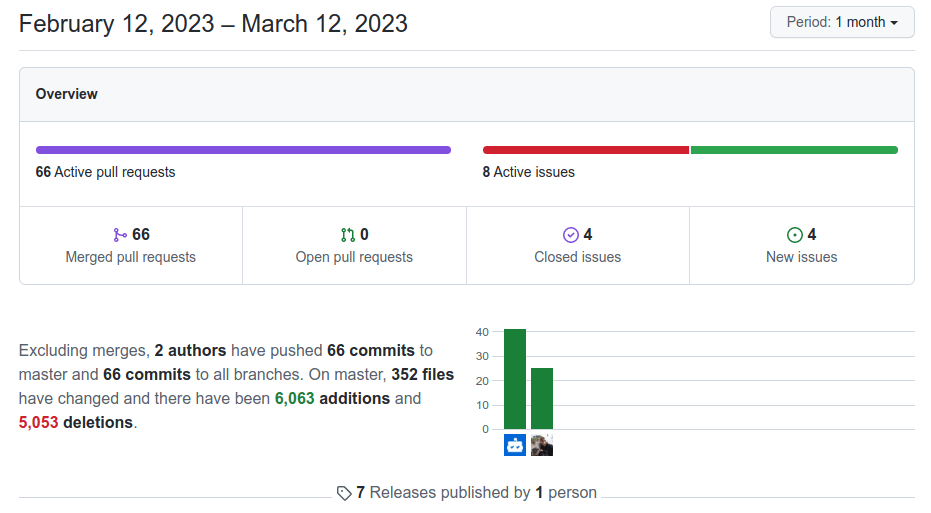
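The configuration above only makes Dependabot open pull requests; merging them is a separate step. One common way to wire that up is a small GitHub Actions workflow like the sketch below. This is not necessarily how Leaf does it: the file name, the job name, and the choice of a merge commit are all assumptions, and it relies on auto-merge being enabled in the repository settings with the test suite set as a required status check.

```yaml
# .github/workflows/dependabot-auto-merge.yml (hypothetical file name)
name: Dependabot auto-merge
on: pull_request

# The workflow token needs write access to enable auto-merge on the PR.
permissions:
  contents: write
  pull-requests: write

jobs:
  auto-merge:
    runs-on: ubuntu-latest
    # Only act on pull requests opened by Dependabot.
    if: github.event.pull_request.user.login == 'dependabot[bot]'
    steps:
      - name: Enable auto-merge once required checks pass
        # --merge is an assumption; --squash or --rebase work the same way.
        run: gh pr merge --auto --merge "$PR_URL"
        env:
          PR_URL: ${{ github.event.pull_request.html_url }}
          GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```

With `--auto`, GitHub waits for the required checks before merging, so a failing test suite leaves the pull request open instead of merging it.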

So now Dependabot checks daily whether any of my packages has a compatible version update and, if so, opens a pull request for the upgrade. After about a month I've collected a mix of feedback.

It doesn't auto-rebase between ecosystems

If three pull requests are open, one in each ecosystem, the first one will merge and the other two won't automatically rebase, because the lock file they monitor was not updated.

That leaves me rebasing by hand, which delays the pure automation of this.

It works too fast against me at times

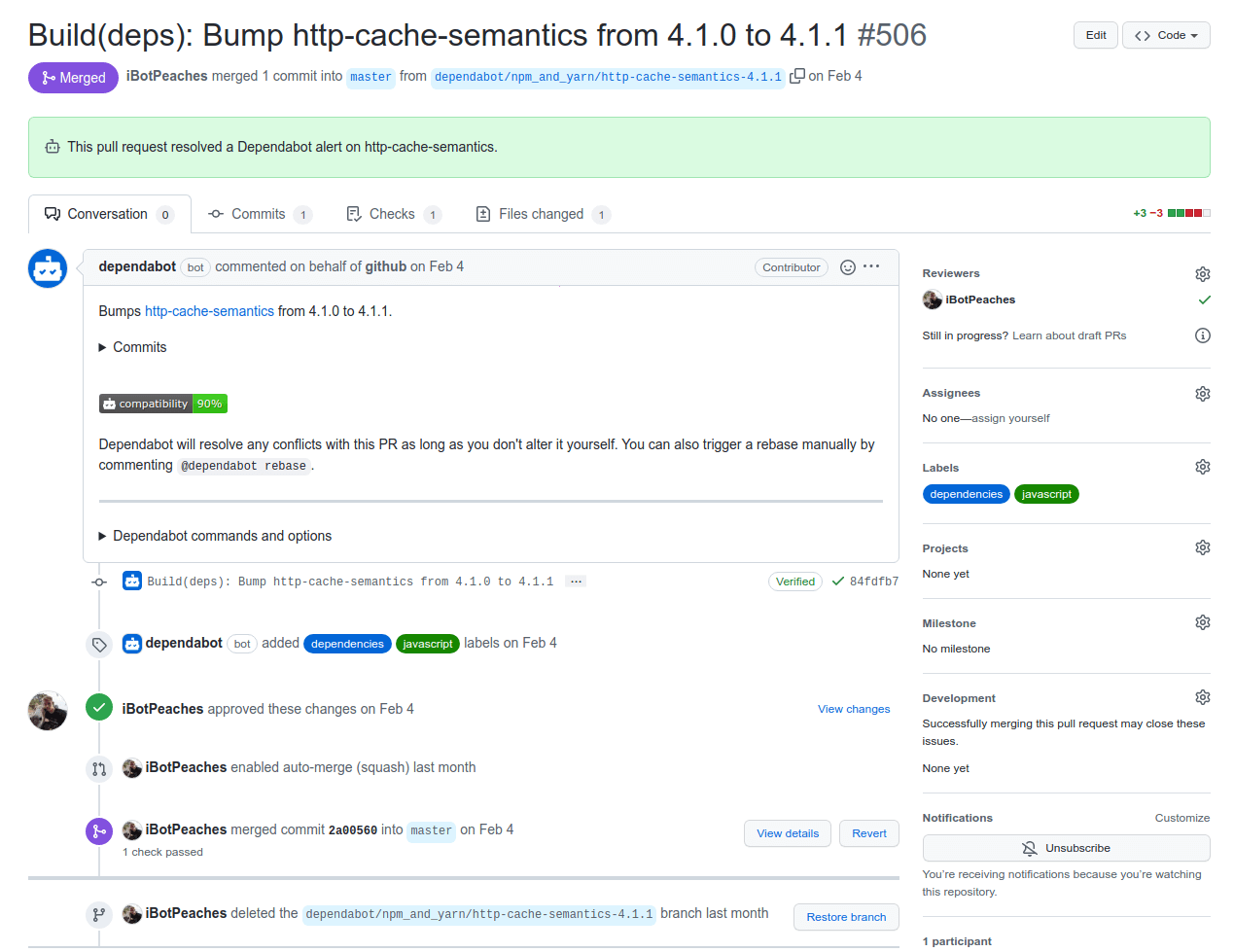

With it running daily, if a release is later pulled or was published by mistake but still passes the test suite, it'll be merged. Take a look at the title of one bugged merge I got.

    Build(deps): Bump laravel/horizon from 5.14.2 to 515.0 #577

Clearly this was meant to be version 5.15, not 515. Once that bugged tag was removed and purged, I noticed some struggles updating dependencies because that tag could no longer be resolved. I tracked it down to a bug and simply upgraded to the proper version by hand.
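One possible guard against a jump like this, which this project does not necessarily use, is to tell Dependabot to skip major version bumps for an ecosystem. A bump from 5.14.2 to 515.0 should be classified as a major update, so a rule like the sketch below would likely have kept that pull request from being opened at all. The trade-off is that genuine major upgrades then have to be started by hand.

```yaml
# .github/dependabot.yml (composer entry only; the ignore rule is an assumption,
# not part of the configuration shown earlier in this post)
version: 2
updates:
  - package-ecosystem: composer
    directory: /
    schedule:
      interval: daily
      time: "04:00"
      timezone: "America/New_York"
    ignore:
      # Skip major version bumps for every Composer dependency.
      - dependency-name: "*"
        update-types: ["version-update:semver-major"]
```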

Upgrades are easy

With isolated dependency upgrades it becomes a night and day difference in understanding which package caused a regression or broke the build. In the past I simply ran an upgrade of all packages every few weeks, and that made it stressful to identify which of the upgraded packages caused a failure.

Now when I have an unmerged pull request, I know a breaking change was encountered and I can triage it as such. This helps keep the project up to date and allows me to move to new major versions of, say, PHP and Laravel without much effort.

Hands off

I wake up and chances are my project has had a few merges already. With automation running nearly every day, I know that when I sit down to work on a bug or feature, a simple git pull is all it takes to get straight to work.

Additional Vector

However, I understand that giving Dependabot the ability to auto-merge code has opened a new attack vector. If a dependency publishes a malicious update, I would automatically take that upgrade and possibly deploy it to production later on.

I'm not worried about the actual CI/CD vector though. Secrets are only exposed from the context of a deployment and build machines are random GitHub runners.

So take a look at the repository - chances are it's still 100% covered with automatic Dependabot merges enabled.